Responsible AI

We work to advance AI that is safe, ethical, multilingual, and universally accessible, empowering organizations to maximize impact and meaningfully serve humanity.

Missions That Matter

Our AI Services

We help organizations across sectors identify and manage AI risks, strengthen human and technical safeguards, and build culturally attuned, workforce‑ready AI solutions.

→ Responsible AI and Technical AI Safety

→ Novel and Multilingual Training Data Curation

→ AI Workforce Readiness and Adoption

Leading AI Labs

Trust Your AI

Testing & Evaluation

Trust that you understand your model's behavior

- Red-team systems to find failures

- Benchmark models at scale (e.g., implementation in Inspect)

- Quantify real-world impact through uplift

- Enable repeatable evaluations

Guardrails + Safeguards

Trust that your safeguards work

- Optimize training data and fine-tuning

- Test safeguards in production

- Filter hazardous data (do-not-train)

- Monitor deployed systems

Ecosystem Risk Research, Policy + Governance

Trust that you have clarity on all of the risks

- Identify emerging AI threats

- Emulate real threat actors

- Deliver multilingual risk intelligence (380+ languages)

- Illuminate misuse and autonomy risks

- Design responsible AI governance

- Set deployment thresholds and safety criteria

- Translate risk into actionable playbooks

Model + Tool Provisioning

Trust that you have the right systems to advance your mission

- Centralize testing across 200+ LLMs

- Orchestrate multimodal and agentic evaluations

- Challenge systems through advanced digital twins

Multilingual + Niche Data Curation

Trust that your data unlocks your model's full potential

- Work in 380+ languages

- Scale to languages with over half a billion speakers

- Reach users of languages with as few as 10,000 speakers

- Localization & Diverse Expertise

- Design bespoke datasets to help your technology, marketing, or products land

- Engage a network of academic experts in any domain to customize your training data

Human-Centered AI Fluency

Trust that your teams are ready to unleash AI

- Set a standard for AI excellence and usage in your org

- Unlock new tools to advance your mission

- Build leadership and decision readiness (e.g., red-teaming failure modes)

- Lead teams through AI-driven change

- Set guardrails for responsible use

- Decide where AI adds value

We design responsible AI for humans, no matter...

Responsible AI Case Studies

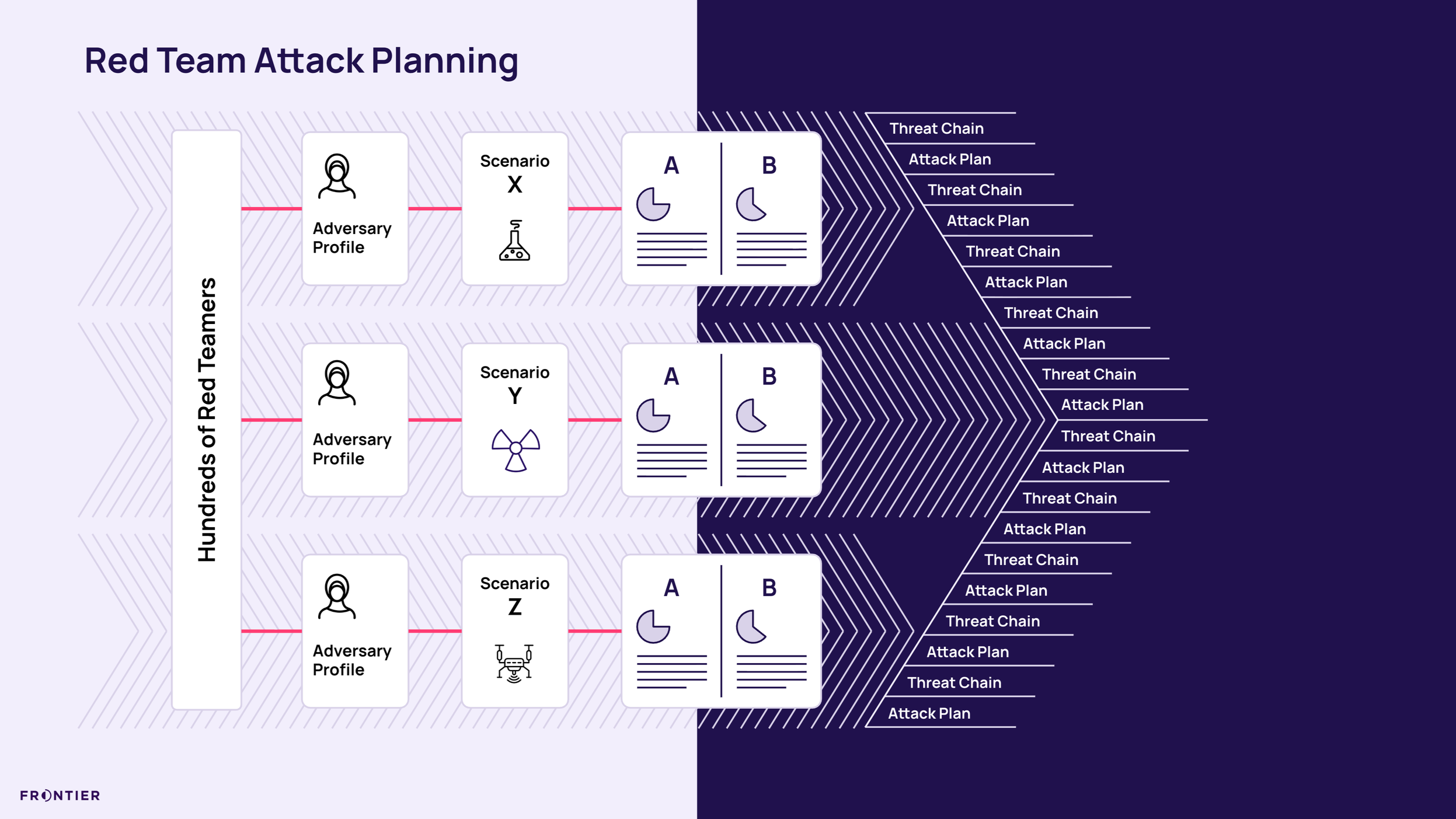

Thinking Like the Enemy

Quantifying AI and Biological Risk

The AI Safety Fund engaged Frontier to design and implement a novel safety benchmark examining how large language models could elevate biological risk. Drawing on scientists, former defense experts, and creative red-teamers, Frontier built a rigorous evaluation framework that tests real-world misuse scenarios and informs model safety decisions.

Frontier's Secure Red-Teaming Playground

In 2024, Frontier built a secure execution platform to conduct large-scale AI red-teaming with speed and operational control. The system enables eligibility screening, blind testing across major models, secure workflows, and rapid deployment of hundreds of participants, strengthening safety evaluations without compromising confidentiality.

AI Readiness at Scale

Designing Responsible Adoption Across a Distributed Workforce

When a U.S. federal agency rolled out an enterprise AI platform, it partnered with Frontier to ensure adoption was disciplined and mission-aligned. We designed a scalable enablement architecture that embedded responsible use, real-world practice, and measurable skill-building across a distributed workforce.

Responsible AI News

Meet Your Responsible AI Team

Christopher Cook

Senior Director

Steve Sheamer

Senior Director

Robin Vande Werken

Principal Advisor

Julia Lang

Principal Advisor

Michael Bailey

Principal Designer

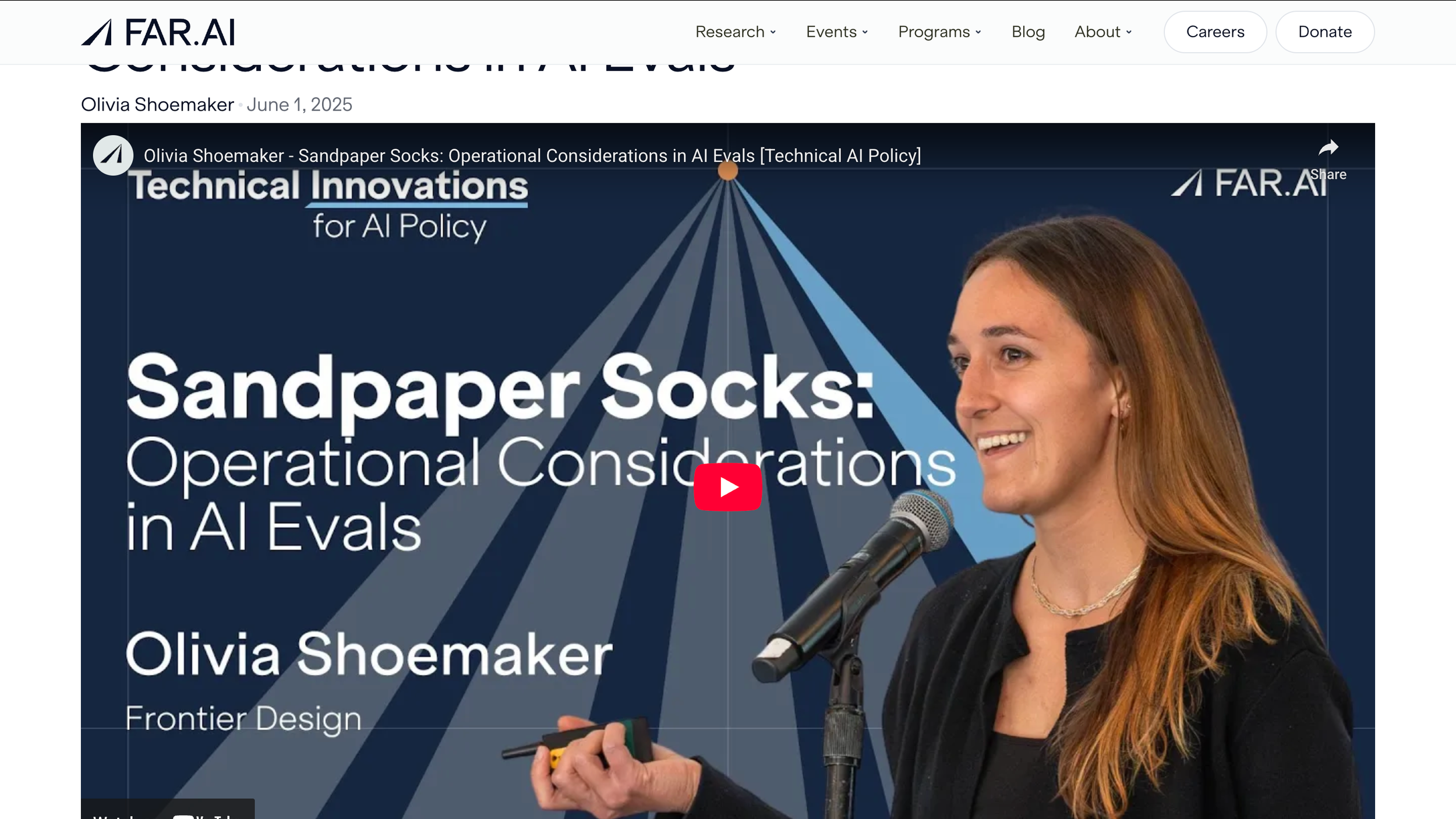

Olivia Shoemaker

Lead Advisor

Helen Keshishian

Senior Advisor

Colin SyBing

Senior Advisor